Most corporations are becoming data-driven, and with that shift come new buzzwords that seem to confuse everyone.

Groups, organizations and meet-ups keep popping up to talk about AI, Big Data or Data Science. What’s going on? Which one should you really focus on? And how do you tell when someone doesn’t really know what they are talking about?

These buzzwords don’t have to be confusing. Let us explain them in easy-to-understand terms.

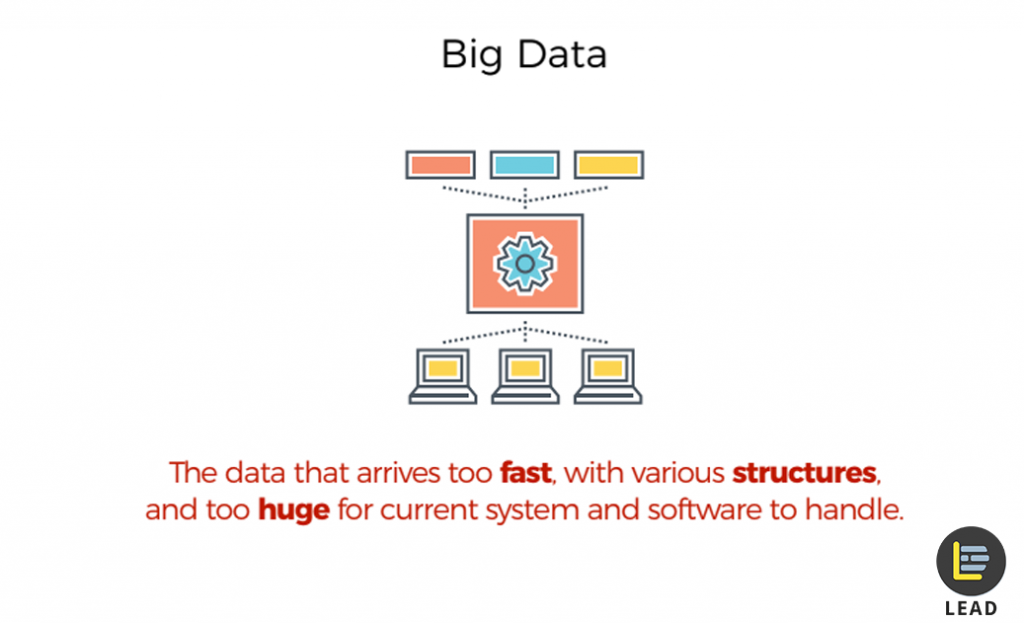

Let’s start at the beginning. What is Big Data?

Often used as a buzzword to make any conversation sound more cutting-edge, Big Data is simply a term for a massive volume of data (structured and unstructured) that is too big, or moves too fast, to be processed with common software such as Microsoft Excel.

However, it’s never about how big the data is. It’s what we do with the data.

And you’ll find that it’s usually for-profit companies that analyze it, in search of hidden insights that drive better business decisions.

So what is Data Science then?

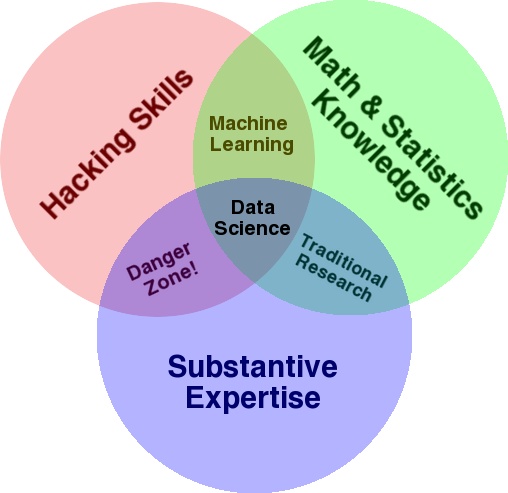

Data science is a combination of mathematics, programming, problem-solving and statistics skills, used to mine and clean data (structured and unstructured) and extract valuable insights from it.

See the Venn diagram above, created by Drew Conway. Data science is the combination of hacking skills, math and statistics knowledge, and subject expertise. If you can do all three, you’re considered a high-level expert in data science.

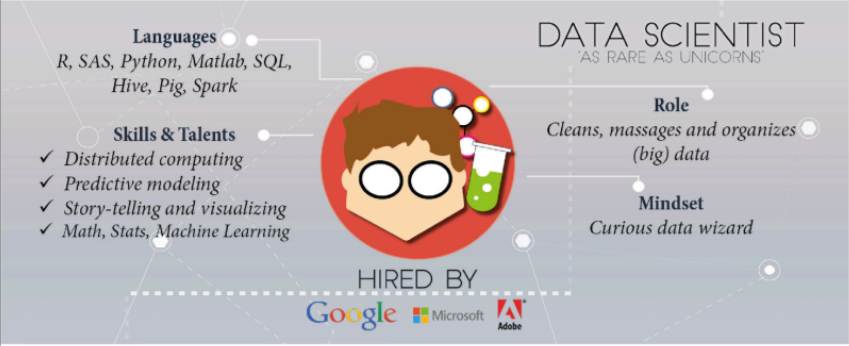

And that brings us to the Data Scientist

To be a data scientist means you’re someone with skillsets drawn from the domains explained earlier, mainly mathematics, statistics, programming and computer science.

The job title ‘data scientist’ was only coined in 2008, and Wikipedia first had a ‘data science’ page in 2012. This short history is one reason why data science jobs still feel new and are in such strong demand.

A career-ready data scientist must have the following skillsets:

- Programming knowledge of data science tools like Python, R, and Tableau.

- Ability to work with data from all kinds of sources and write queries for SQL databases.

- Ability to organize and wrangle large datasets to get actionable insights.

- Good communication skills.

- Ability to create clear and meaningful visualizations with the right tools.

- Knowledge and understanding of machine learning techniques & algorithms.

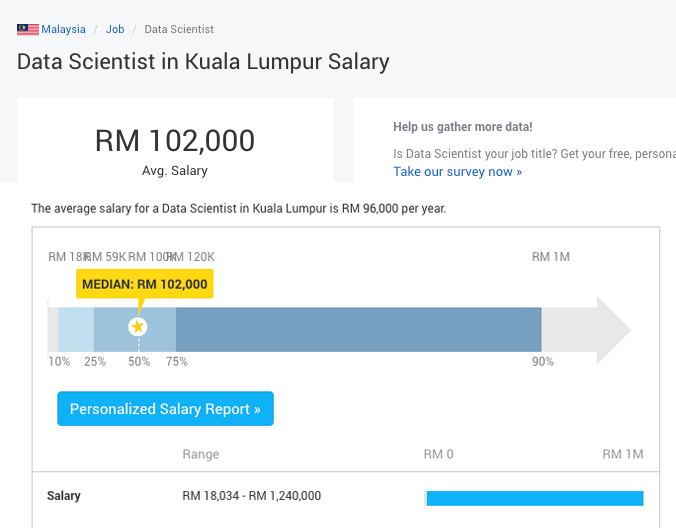

If you have read this far, it should also have occurred to you that data scientists are still a rare find, which makes data science a potentially lucrative career.

What is Machine Learning?

Machine learning (ML) is a subset of artificial intelligence. It is a field that gives computer systems the ability to learn by themselves, without being explicitly programmed. For example, a computer system can progressively improve at a certain task without someone constantly reprogramming it.
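
To make "learning without being explicitly programmed" more concrete, here is a minimal sketch in Python. It is only an illustration under stated assumptions: the data is made up, the hidden rule (y = 2x + 1) is our own invention, and the function name `train` is hypothetical. The program is never told the rule; it only sees examples and gradually adjusts two numbers to reduce its error.

```python
# Made-up examples: the hidden rule is y = 2x + 1, but the program is never told that.
examples = [(x, 2 * x + 1) for x in range(10)]


def train(examples, steps=5000, learning_rate=0.01):
    """Fit y = w*x + b by gradient descent on the mean squared error."""
    w, b = 0.0, 0.0  # start with no knowledge of the rule
    n = len(examples)
    for _ in range(steps):
        # How much the error changes when w or b changes slightly.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in examples) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in examples) / n
        # Nudge both numbers in the direction that reduces the error.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


w, b = train(examples)
print(round(w, 2), round(b, 2))
```

After training, `w` and `b` should end up very close to 2 and 1. Nobody typed those values in; they were recovered from the examples, which is the basic idea behind machine learning.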

An example you can immediately relate to is Facebook’s algorithm. Facebook’s machine learning algorithms learn about you: your habits and what you like. They predict your interests and show you the content and news updates that you are most likely to engage with.

Other machine learning examples include Netflix, which recommends movies to you, and e-commerce stores that show you products that ‘you might like’.
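
As a rough illustration of the ‘you might like’ idea, the sketch below recommends titles liked by the most similar other user. This is not how Netflix or any real store works internally; the users, titles and function names are all hypothetical, and the similarity measure (Jaccard overlap between sets of liked titles) is just one simple choice.

```python
# Hypothetical data: each user mapped to the set of titles they liked.
likes = {
    "ana": {"Alien", "Blade Runner", "Arrival"},
    "ben": {"Alien", "Blade Runner", "Dune"},
    "cho": {"Notting Hill", "Amelie"},
}


def jaccard(a, b):
    """Share of titles two users have in common, between 0.0 and 1.0."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def recommend(user, likes):
    """Suggest titles liked by the most similar other user that `user` has not liked yet."""
    mine = likes[user]
    scored = [
        (jaccard(mine, theirs), name)
        for name, theirs in likes.items()
        if name != user
    ]
    best_score, best_name = max(scored)
    if best_score == 0:
        return set()  # nobody has similar taste, so there is nothing to suggest
    return likes[best_name] - mine


print(recommend("ana", likes))
```

Here `ana` and `ben` share two titles, so `ana` is offered the one title `ben` liked that she has not seen. Real recommenders learn from far more signals, but the principle of predicting interest from past behaviour is the same.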

Then, what is Artificial Intelligence?

Machine learning refers to the practice of training a computer to progressively learn, but these algorithms don’t need to understand why they self-correct and progressively improve. They were programmed to do so.

Artificial intelligence (AI) refers to machines that can perform tasks in ways that mimic the characteristics of human intelligence. When a machine reaches the point where it can think like a human, reflect on its errors and make decisions by itself, that’s artificial intelligence.

We hear these two buzzwords together quite often because artificial intelligence cannot exist without machine learning, but machine learning can exist without artificial intelligence.

Are People Over-Marketing AI?

There has been a lot of confusion over the term AI, especially when people attach the term ‘AI’ to products, mostly to make them sound more advanced. Most ‘AI something’ products are really just products that involve data computation; there isn’t any real intelligence behind them.

Many people are simply automating basic tasks using computers and over-marketing them as AI. We can program machines to learn and process. A machine can also be taught to learn by other machines, but machines cannot yet be taught to think for themselves.

In conclusion, many so-called AI technologies are essentially machine learning systems, rather than AI.

Lastly, What Is Deep Learning?

Deep learning is a subset of machine learning. Think of it as an algorithmic technique for implementing machine learning, using an algorithm structure called an Artificial Neural Network (ANN), inspired by the biological neural networks of the human brain. It is designed to analyze data with a logic structure similar to how a human would draw conclusions.
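
To give a feel for the layered structure of a neural network, here is a very small sketch in Python. It is a toy under stated assumptions: the weights are picked by hand, whereas a real deep learning system learns its weights from data and has far more neurons and layers. The function names (`step`, `neuron`, `tiny_network`) are hypothetical. The network answers "is exactly one of the two inputs switched on?", something a single neuron of this kind cannot do but two layers can.

```python
def step(z):
    """Fire (1) if the combined signal is positive, otherwise stay silent (0)."""
    return 1 if z > 0 else 0


def neuron(inputs, weights, bias):
    """Weigh each input, add them up with a bias, and decide whether to fire."""
    return step(sum(i * w for i, w in zip(inputs, weights)) + bias)


def tiny_network(x1, x2):
    # Hidden layer: two neurons look at the raw inputs.
    h_or = neuron([x1, x2], [1, 1], -0.5)   # fires if at least one input is on
    h_and = neuron([x1, x2], [1, 1], -1.5)  # fires only if both inputs are on
    # Output layer: combines the hidden neurons' answers.
    return neuron([h_or, h_and], [1, -1], -0.5)  # fires for "one, but not both"


for a in (0, 1):
    for b in (0, 1):
        print(a, b, tiny_network(a, b))
```

Each layer turns its inputs into slightly more useful signals for the next layer. Stacking many such layers, and learning the weights automatically instead of choosing them by hand, is what the "deep" in deep learning refers to.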

One great example of deep learning in action came in May 2017, when AlphaGo, a deep learning project from Google’s DeepMind, took on Ke Jie, then the world’s top-ranked Go player. AlphaGo had learned the game by studying records of human games and by playing against itself, and an earlier version had already beaten many top professionals on online game servers such as Tygem. The startling revelation was that AlphaGo was able to beat Ke Jie by playing in ways that humans had not thought of before.

Is Artificial Intelligence Going To Take Over Humans?

Despite the many sci-fi movies you may have watched, the answer is no. Artificial intelligence will not take over humans, or their jobs.

While it’s true that AI can be smarter and more capable than humans, usually at pattern recognition, we will always be faster to adjust and adapt than computers, because that is what we are built to do.

Instead of fearing that artificial intelligence will replace us, take the time to learn how to control AI and use available technologies to help with your everyday work and life.

That is why we started a complete data science course, Data Science 360.

Perhaps in some distant future, computers will catch up to the full range of human abilities. But as of now, humans have learned to harness computing to augment their own native abilities. Humans are always going to stay smarter than AI.
